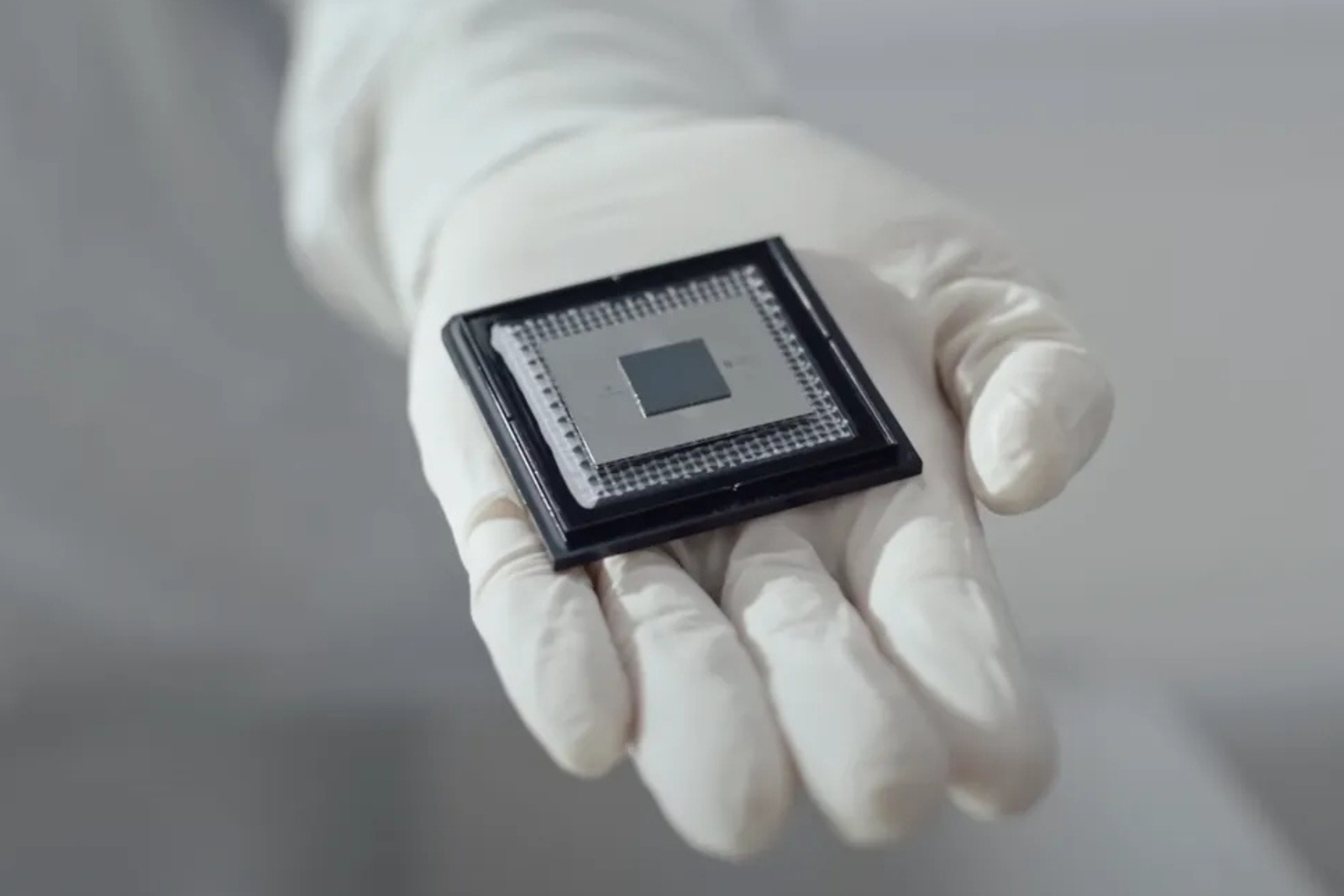

- Google unveiled its latest quantum processor, Willow, which features 105 qubits and represents a significant leap in performance over previous generations.

- Google Quantum AI founder Hartmut Neven suggested the chip’s results support interpretations of quantum mechanics that involve parallel outcomes, often linked to multiverse theory.

- The speculation focuses on the idea that quantum computation may effectively operate across multiple possible states at once, rather than completing calculations sequentially.

- Despite the speculation, Willow remains a research-focused system, with quantum computing still far from practical consumer or commercial use.

When Google unveiled its newest quantum chip, Willow, it marked another meaningful step in the company’s ongoing effort to turn quantum computing from a theoretical concept into a practical tool. Willow is Google’s fourth-generation quantum processor, and early results suggest it performs far beyond its predecessors, particularly as computational problems become more complex. Rather than becoming more error-prone as it scales up, the chip seems to become more stable as more qubits are used, addressing one of the biggest challenges that have held back quantum computing for years.

What truly pushed Willow beyond the tech industry, however, wasn’t just its performance improvements or the market reaction following the announcement. It was a comment from Hartmut Neven, who stated that the chip’s behaviour “lends credence to the notion that quantum computation occurs in many parallel universes, in line with the idea that we live in a multiverse.” Hang on a second, what was that again? Yup, it takes a moment to sink in. For anyone outside quantum physics, that remark falls somewhere between fascinating and baffling, and it’s no surprise it generated headlines, debate, and a fair amount of scepticism.

The company is still in the research stage, tackling significant theoretical and engineering challenges to make quantum computing dependable and scalable. Google isn’t claiming to have proven the existence of a multiverse. Even among scientists, much remains unclear, which makes this field particularly hard to explain simply. If the discussion feels confusing, that’s part of the process. What matters is understanding what Willow actually demonstrates, what it doesn’t, and why a single comment was enough to bring quantum computing into a much bigger debate about the nature of reality itself.

A quantum chip is designed to process information differently from the chips in everyday devices. Traditional computers use bits that are either on or off, whereas quantum chips use qubits, which can exist in multiple states at once. This enables a quantum system to explore multiple possible outcomes simultaneously instead of working through them one by one.

In practical terms, this means quantum chips are made for problems that are too complex for regular computers to solve efficiently. They aren’t intended to replace laptops or smartphones but to address specialised challenges like advanced simulations, optimisation problems, and scientific research. While the science behind them is complex, the aim is clear: to solve certain issues faster and more effectively than current technology allows.

The computers we use every day are known as classical computers, and they have been evolving for decades. At their core, they depend on binary logic. Every piece of information is divided into bits, which act like tiny switches. Each bit can only be in one of two states at a time: 0 or 1. No matter how powerful your laptop feels, everything it does ultimately comes down to enormous networks of these on/off signals working step by step.

A quantum computer works very differently. Instead of bits, it uses qubits, which don’t have to immediately settle on 0 or 1. Thanks to a phenomenon called superposition, a qubit can represent multiple possible states at the same time. This is where quantum computing begins to feel strange, because it doesn’t behave in a way that matches everyday intuition. Rather than committing to a single outcome right away, the system works with probabilities.
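To make "working with probabilities" a little more concrete, here is a minimal sketch in plain Python. It assumes the standard textbook picture, in which a qubit is described by two numbers called amplitudes and the chance of reading 0 or 1 is the squared size of each amplitude. The function name and the chosen amplitudes are illustrative assumptions, not anything taken from Google's software.

```python
import math

def measurement_probabilities(amplitudes):
    """Turn a list of amplitudes into outcome probabilities.

    Assumes the textbook rule: probability = |amplitude|^2,
    after rescaling so the probabilities add up to 1.
    """
    norm = math.sqrt(sum(abs(a) ** 2 for a in amplitudes))
    return [abs(a / norm) ** 2 for a in amplitudes]

# Illustrative qubit in an equal superposition of 0 and 1.
equal_superposition = [1 / math.sqrt(2), 1 / math.sqrt(2)]
probs = measurement_probabilities(equal_superposition)

# Each outcome comes out at roughly 0.5: the qubit has not "picked" 0 or 1 yet.
print(probs)
```

Until it is measured, the qubit carries both possibilities; the measurement itself returns just one of them, with the odds shown.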

Still confused? Yeah, so were we, so here’s a simpler way to look at it.

Imagine a system with 2 bits, where each bit can only be 0 or 1. That results in 4 possible combinations in total. A classical computer has to check each combination one by one. If evaluating each option takes 1 second, the entire process takes 4 seconds to complete.
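The classical side of this example can be sketched in a few lines of Python. The function name and the 1-second cost per check are assumptions carried over from the example, not measurements of any real machine.

```python
from itertools import product

def classical_check_time(num_bits, seconds_per_check=1):
    """List every bit combination and total the time to check them one by one."""
    combinations = list(product([0, 1], repeat=num_bits))
    return combinations, len(combinations) * seconds_per_check

combos, total_seconds = classical_check_time(2)
print(combos)         # [(0, 0), (0, 1), (1, 0), (1, 1)]
print(total_seconds)  # 4
```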

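A quantum computer approaches the same task differently. With 2 qubits, it can hold all 4 combinations in play simultaneously instead of processing them one by one. In this simplified picture, instead of taking 4 seconds, the system can produce a result in roughly the same 1-second timeframe. There is a catch: reading out the qubits yields only one result, so quantum algorithms have to be designed so that the right answer is the most likely one to appear. The real benefit becomes evident as the problem size increases.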

As more qubits are added, the number of possible outcomes grows exponentially. A classical computer slows down as it processes each possibility one by one. A quantum computer gains its advantage by working with an enormous number of potential outcomes at once. That’s why scale is so important in quantum research, and why chips like Willow, which contains 105 qubits, mark a significant step forward.
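To get a feel for "exponentially", the short sketch below counts how many combinations of 0s and 1s exist for a given number of qubits, which is 2 raised to that number. The function name is an illustrative assumption, and the count describes the size of the space of outcomes, not a speed guarantee.

```python
def num_basis_states(num_qubits):
    """Number of distinct 0/1 combinations for the given number of qubits."""
    return 2 ** num_qubits

for n in (2, 10, 50, 105):
    print(n, num_basis_states(n))
```

For 2 qubits this gives the 4 combinations from the example above. For 105 qubits, the number has 32 digits, roughly 4 followed by 31 zeros.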

When Willow was announced, Hartmut Neven explained that the chip completed a deliberately complex test, known as random circuit sampling, in just five minutes. In comparison, one of the world’s most advanced classical supercomputers would take an estimated 10,000,000,000,000,000,000,000,000 years to perform the same task. Neven noted that this timeframe “exceeds known timescales in physics and vastly exceeds the age of the universe.”
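For a sense of scale, the quoted figure can be compared with the age of the universe. The sketch below assumes the commonly cited estimate of about 13.8 billion years for the universe's age; that number is an outside assumption, not something stated in Google's announcement.

```python
# Figure quoted for the classical supercomputer: 1 followed by 25 zeros.
CLASSICAL_ESTIMATE_YEARS = 10 ** 25

# Assumption: commonly cited estimate of the age of the universe.
AGE_OF_UNIVERSE_YEARS = 13.8e9

ratio = CLASSICAL_ESTIMATE_YEARS / AGE_OF_UNIVERSE_YEARS
print(f"{ratio:.1e}")  # 7.2e+14
```

In other words, the estimate is hundreds of trillions of times longer than the universe has existed.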

So why does any of this matter outside the lab? According to Google, the real promise of quantum computing lies in its ability to analyse extremely complex systems. Problems like climate modelling, drug discovery, and understanding diseases involve massive datasets and countless variables that classical computers struggle to process efficiently. Quantum computers could, in theory, handle far more data much more quickly and arrive at better-informed solutions.

It’s also important to note that more qubits do not automatically mean a better machine. Some companies have already developed quantum systems with over 1,000 qubits, but stability, error correction, and reliability are just as crucial as raw numbers. Willow isn’t the largest quantum computer in existence, but it marks a carefully managed step toward making quantum computation more practical and usable.

The multiverse idea entered the discussion as a way to explain how extraordinary quantum computing can look when compared to classical machines. When a system like Willow evaluates a vast number of potential outcomes at once, the question stops being about speed and starts becoming about mechanism. At that level, traditional explanations start to feel stretched, prompting the resurgence of more unconventional interpretations.

One line of thought, openly considered by Hartmut Neven, proposes that quantum computation might not be limited to a single outcome or even a single reality. In this perspective, a quantum computer effectively investigates multiple potential realities simultaneously, with each outcome influencing the final result. This concept aligns with the many-worlds interpretation of quantum mechanics, where every possible outcome coexists rather than collapsing into one.

As we have noted, this remains theoretical. Willow hasn’t demonstrated the existence of parallel universes, nor provided evidence of communication beyond our physical reality. What its performance has revealed is how far quantum behaviour can stretch our current models of physics. When a chip solves a problem in minutes that would take a classical supercomputer longer than the age of the universe, it naturally prompts scientists to ask whether our existing frameworks are incomplete, and not just whether classical machines are too slow.

For now, it’s best to see Willow as a glimpse of where computing might eventually go, not where it is today. Quantum computers are very different from consumer devices. They operate in highly controlled environments, isolated from outside interference and cooled to temperatures colder than deep space. Even the tiniest disturbance, from radio waves to stray radiation, can cause errors. That alone keeps quantum hardware within specialised labs rather than living rooms, and it’s a long way from appearing inside a smartphone or laptop.

There’s also the issue of practicality. While Willow’s performance is impressive, Google is still figuring out how to turn that raw ability into useful applications at scale. In simple terms, the company hasn’t yet managed to convert quantum power into broadly useful commercial tools. Most of the current progress focuses on stability, error correction, and control rather than immediate real-world deployment. That’s why this moment feels more like a scientific milestone than a technological rollout.

Google has updated its quantum roadmap to show steady progress toward a large, error-corrected quantum system, which many see as the true milestone for practical application. There’s much more to this subject than any single article can cover, and anyone interested would benefit from following the research more closely.

For now, the main point is straightforward. Quantum computing is advancing, questions are becoming more complex, and while we’re not close to a quantum-powered future, the direction of travel is increasingly difficult to ignore.